Why 2026 Is the Inflection Point for Healthcare Voice AI

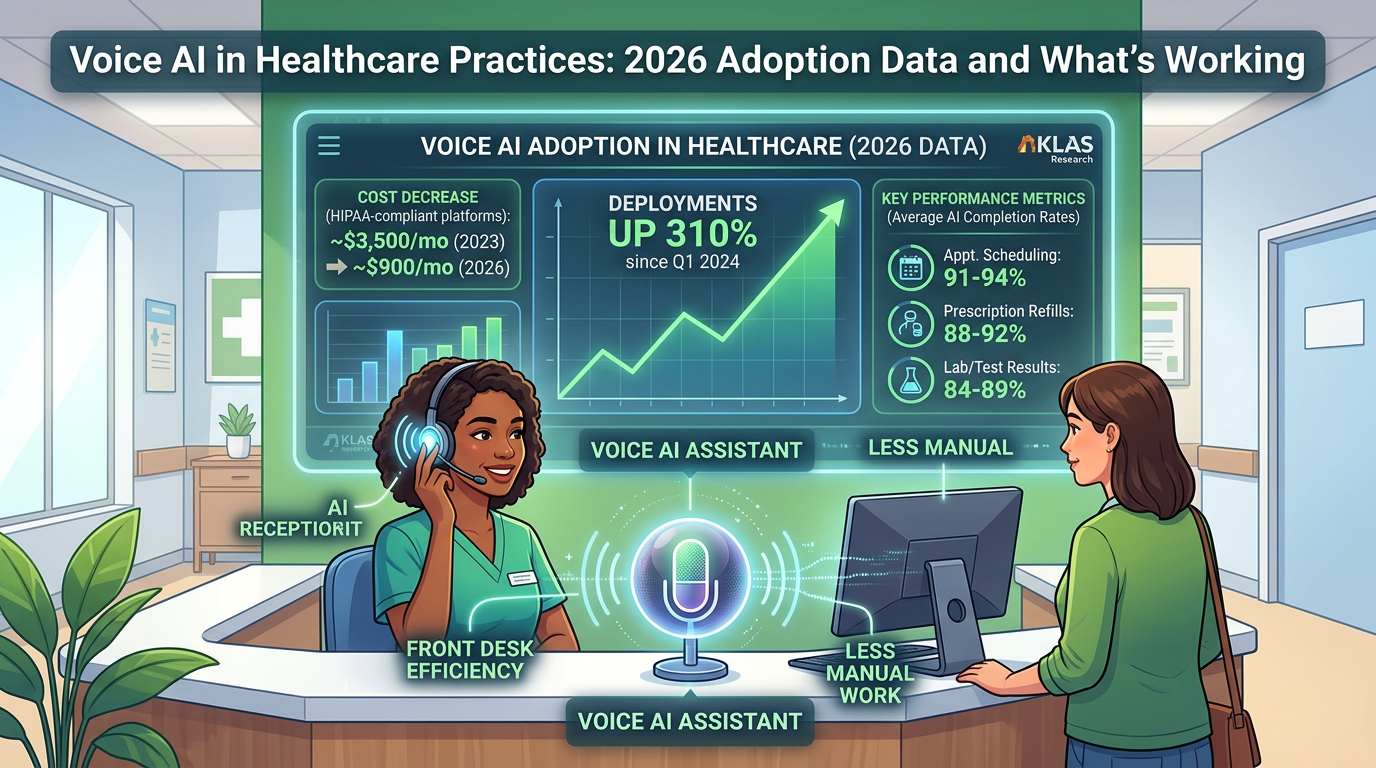

The healthcare front desk has been an operational liability for decades — high call volume, chronic understaffing, and the same ten task categories handled manually on repeat. Voice AI has been positioned as the fix since at least 2022. What's different in 2026 is the economics. HIPAA-compliant voice AI platforms that ran $3,500–5,000/month in 2023 are available today for $600–1,200/month, driven by infrastructure commoditization and competitive pressure. That pricing shift extended the addressable market from large health systems down to single-physician practices and small group clinics.

The result: voice AI deployments in clinical settings have grown approximately 310% since Q1 2024, according to healthcare IT survey data from KLAS Research and independent tracking by healthcare technology consultancies. But faster adoption has come with a higher failure rate. An estimated 28% of new healthcare voice AI deployments are abandoned within 90 days — almost always for the same identifiable reasons. Growth and maturity are different things, and the gap between practices doing this well and practices wasting money on it is measurable.

Where Healthcare Practices Are Actually Deploying Voice AI

The highest-performing implementations in 2026 are concentrated in a specific category: high-volume, low-ambiguity interactions. These are calls where the decision path is finite, the data required is structured, and success can be measured objectively. The table below shows where practices are deploying and what AI call completion rates look like across the most common use cases.

| Use Case | Typical Share of Call Volume | AI Completion Rate | Primary Failure Point |
| --- | --- | --- | --- |
| Appointment scheduling | 35–45% | 91–94% | Complex reschedules, multi-provider requests |
| Prescription refill status | 15–20% | 88–92% | Multi-drug queries, clinical clarifications |
| Lab/test result inquiries | 10–15% | 84–89% | Results requiring clinical interpretation |
| Prior auth status checks | 5–8% | 78–83% | Non-standard insurance workflows |
| Billing inquiries | 8–12% | 58–65% | Disputes, complex plan questions |
| General clinical questions | 10–15% | 41–52% | Ambiguous symptom queries, liability exposure |

The pattern is clear: where the conversation path is bounded, as in scheduling and refill status, AI completion rates run 88–94%. Where the path opens up (billing disputes, clinical questions, emotionally sensitive calls), completion rates fall to 65% or lower and patient satisfaction scores drop sharply. Practices that fail tend to expand scope too fast, routing judgment-required calls to the AI after an early win with scheduling.
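One way to guard against scope creep is to make the AI's scope an explicit allowlist, so that anything not deliberately added defaults to a human. The sketch below is illustrative only: the intent labels and the `route_call` function are hypothetical names, not part of any particular platform, and a real system would use whatever intent taxonomy its vendor provides.

```python
# Hypothetical intent labels for the bounded use cases a practice has approved.
# Starting narrow (scheduling and refill status) mirrors the "early win" stage.
APPROVED_INTENTS = frozenset({"appointment_scheduling", "refill_status"})


def route_call(intent: str, approved: frozenset = APPROVED_INTENTS) -> str:
    """Send a call to the AI only if its intent is explicitly approved.

    Unknown or unapproved intents fall through to staff, so expanding
    scope requires a deliberate change to the allowlist.
    """
    return "ai" if intent in approved else "staff"


if __name__ == "__main__":
    for intent in ("appointment_scheduling", "billing_dispute", "unrecognized"):
        print(f"{intent} -> {route_call(intent)}")
```

The design choice here is the default: a call the system cannot classify is treated the same as a judgment-required call and goes to staff.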

One emerging use case gaining significant traction in 2026 is outbound prior authorization follow-up. Rather than replacing inbound call handling, AI handles the tedious work of calling insurance lines to check auth status — navigating hold queues and phone trees so clinical staff don't have to. Specialty practices report reclaiming 6–10 staff hours per week on this task alone, with near-zero patient-facing risk since patients aren't involved in those calls.

Adoption Rates by Specialty: The Uneven Landscape

Voice AI adoption is not uniform across healthcare. Call volume patterns and patient population dynamics vary enough by specialty that the ROI case — and the risk profile — look very different depending on the practice type.

The behavioral health data point is worth holding onto as a general principle: voice AI adoption rates correlate inversely with the emotional complexity of the patient population. That's not a technology limitation — it's a deployment decision. The technology could technically handle the call; the question is whether it should.

Running the Real ROI Calculation

Vendor materials on voice AI ROI tend to go vague at the exact moment specifics matter. Here's a grounded calculation for a mid-size primary care practice: 3 physicians, 2 nurse practitioners, 2 full-time front desk staff handling ~220 calls per day.

| Scenario | Calls Handled by AI | Monthly Labor Savings | After-Hours Revenue Recovery | Monthly Platform Cost | Net Monthly Benefit |
| --- | --- | --- | --- | --- | --- |
| Conservative (scheduling only, business hours) | 96/day (44%) | $1,920 | $0 | $800 | $1,120 |
| Realistic (scheduling + refills + after-hours) | 143/day (65%) | $2,860 | $3,850 | $900 | $5,810 |
| Full deployment (+ prior auth + EHR integration) | 165/day (75%) | $3,300 | $5,600 | $1,100 | $7,800 |

Assumptions: Front desk staff at $20/hr; avg AI-handled call saves 3.2 minutes of staff time; after-hours captures 30 previously missed calls/day, 35% are appointment requests, 60% convert; avg appointment value $175.
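For practices that want to plug in their own numbers, the net-benefit arithmetic can be sketched in a few lines of Python. This is a minimal illustration, not a financial model: the labor-savings and after-hours figures are taken from the three scenarios as given rather than re-derived, and the `Scenario` class and its field names are invented for this example.

```python
from dataclasses import dataclass

TOTAL_CALLS_PER_DAY = 220      # practice-wide daily call volume from the example
MINUTES_SAVED_PER_CALL = 3.2   # staff time saved per AI-handled call (stated assumption)


@dataclass(frozen=True)
class Scenario:
    name: str
    ai_calls_per_day: int
    monthly_labor_savings: int   # dollars, as given in the scenario
    after_hours_recovery: int    # dollars, as given in the scenario
    platform_cost: int           # dollars per month

    @property
    def net_monthly_benefit(self) -> int:
        # Net benefit = labor savings + recovered revenue - platform cost
        return (self.monthly_labor_savings
                + self.after_hours_recovery
                - self.platform_cost)

    @property
    def ai_share_pct(self) -> int:
        # Share of daily calls handled by the AI, rounded to a whole percent
        return round(100 * self.ai_calls_per_day / TOTAL_CALLS_PER_DAY)

    @property
    def staff_hours_saved_per_day(self) -> float:
        return self.ai_calls_per_day * MINUTES_SAVED_PER_CALL / 60


SCENARIOS = [
    Scenario("Conservative", 96, 1920, 0, 800),
    Scenario("Realistic", 143, 2860, 3850, 900),
    Scenario("Full deployment", 165, 3300, 5600, 1100),
]

if __name__ == "__main__":
    for s in SCENARIOS:
        print(f"{s.name}: {s.ai_share_pct}% of calls, "
              f"{s.staff_hours_saved_per_day:.1f} staff hours/day, "
              f"net ${s.net_monthly_benefit:,}/month")
```

Swapping in a practice's own call volume, savings estimates, and platform quote gives a like-for-like comparison against the three scenarios.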

The after-hours component is where the economics shift most dramatically. Industry data indicates the average practice misses 18–22% of inbound calls during business hours and nearly all calls outside business hours. A voice AI system running 24/7 captures a meaningful share of that missed volume — and for a primary care practice, each recovered appointment has a downstream lifetime value well beyond the $175 visit fee. Typical breakeven timeline: 2–3 months at the realistic scenario, 6–8 months at the conservative one.

The Three Barriers That Actually Kill Implementations

1. HIPAA compliance anxiety (cited by 63% of non-adopters). The concern is legitimate in principle and largely resolved in practice. Modern voice AI platforms built for healthcare offer Business Associate Agreements (BAAs), HIPAA-compliant call recording storage with configurable retention policies, and documented PHI handling protocols. The gap is mostly a perception problem, not a technical one — but practices evaluating platforms should still verify BAA availability, data residency, and breach notification procedures before signing anything.

2. EHR integration gaps. A voice AI system that can schedule an appointment verbally but can't write it to the practice's scheduling system creates reconciliation work that offsets most of the efficiency gain. This barrier is real. Most major EHR platforms — Epic, Athena, eClinicalWorks, Kareo — have documented APIs and certified integration partners, but the integration work requires 4–6 weeks of configuration. Practices that skip this phase and plan to reconcile manually consistently abandon the system faster than practices that invest the setup time upfront.

3. Staff resistance from poor change management. Front desk staff who perceive voice AI as a prelude to their own elimination tend to implement it poorly, skip training, and frame it negatively to patients. The practices with the highest retention rates for voice AI systems have done two things: been explicit with staff that the AI handles administrative volume so that staff can focus on higher-value interactions, and involved front desk staff in the configuration and QA process. Making staff co-owners of the implementation rather than subjects of it changes the outcome substantially.

A Practical Implementation Framework

Practices that succeed in 2026 share a recognizable pattern. It's not complex, but it's disciplined:

The Bottom Line for Practice Operators in 2026

Voice AI in healthcare has cleared the experimental phase. The compliance infrastructure is in place, the EHR integration pathways exist, and two years of deployment data have produced a clear implementation playbook. The 28% failure rate is real, but it's concentrated almost entirely in practices that skip the scope-definition step or try to automate use cases the technology isn't suited for yet. That's a process failure, not a technology failure.

For any practice operator evaluating this right now, the most valuable question isn't which platform to choose. It's: what percentage of our actual call volume fits a bounded, automatable path? Get an honest answer to that — ideally by auditing real call logs — and the implementation decision becomes straightforward. The practices that start with that question are the ones not in the 28%.
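A call-log audit of this kind can start very simply: label a sample of real calls by type, then compute what fraction falls into bounded categories. The sketch below assumes the calls have already been labeled by hand; the category names, the choice of which categories count as bounded, and the sample data are all made up for illustration.

```python
from collections import Counter

# Assumption: which call types this practice treats as bounded and automatable.
BOUNDED_CATEGORIES = frozenset(
    {"scheduling", "refill_status", "lab_results", "prior_auth_status"}
)


def bounded_share(call_labels, bounded=BOUNDED_CATEGORIES) -> float:
    """Return the fraction of labeled calls that fall in bounded categories."""
    counts = Counter(call_labels)
    total = sum(counts.values())
    if total == 0:
        return 0.0
    in_scope = sum(n for label, n in counts.items() if label in bounded)
    return in_scope / total


# Made-up sample of 20 hand-labeled calls, for illustration only.
sample_log = (
    ["scheduling"] * 8
    + ["refill_status"] * 4
    + ["lab_results"] * 2
    + ["billing"] * 3
    + ["clinical_question"] * 3
)

if __name__ == "__main__":
    print(f"Bounded share of sample: {bounded_share(sample_log):.0%}")
```

The useful output is less the single percentage than the per-category counts behind it, since those show which use cases to deploy first and which to keep with staff.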

Specialists in AI front desk implementation, like the team at Epiphany Dynamics, can help practices run that audit before committing to a platform — removing the most common source of misaligned expectations from the start.