The Gap Between "Works in a Demo" and "Works on the Phone"

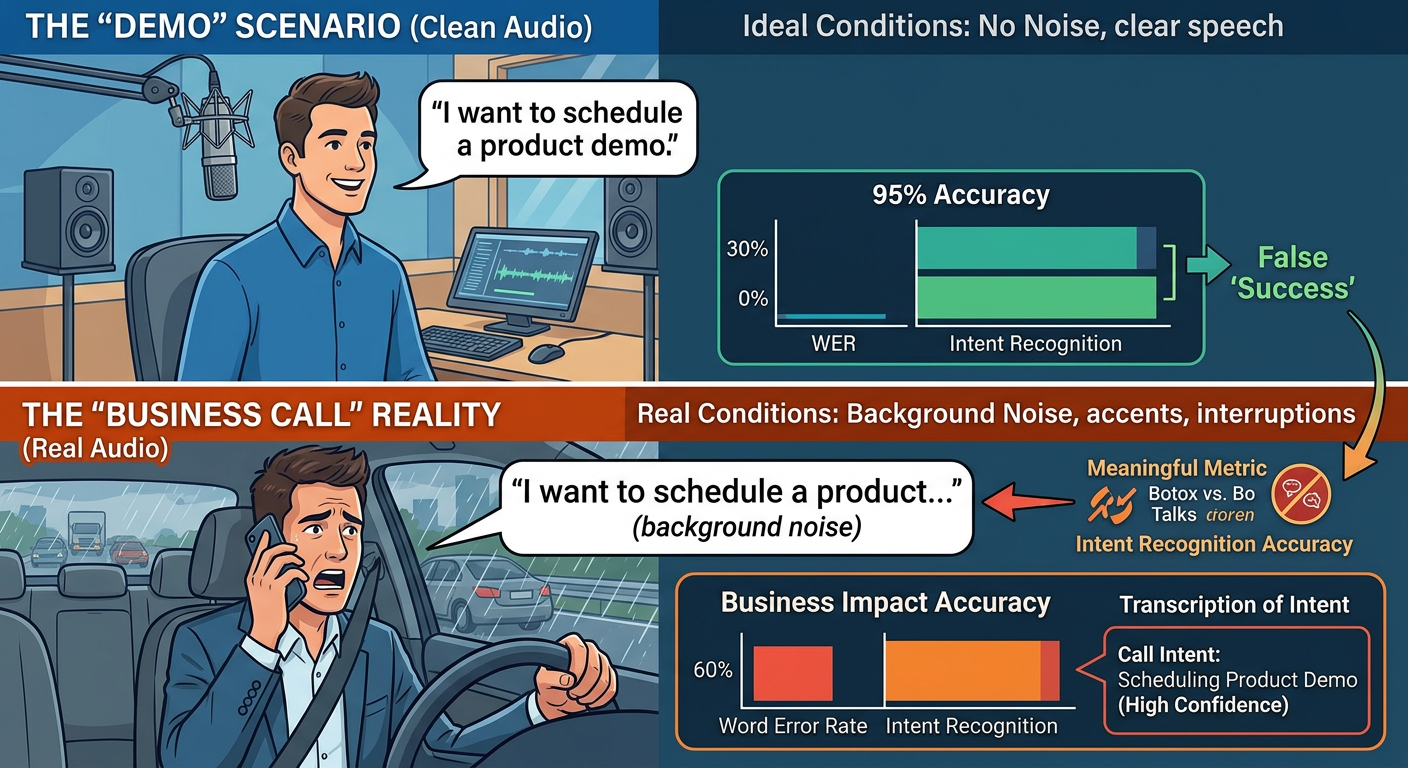

A voice AI demo is almost always impressive. Clean audio, deliberate speech, simple questions. Then you deploy it on actual inbound business calls — a patient calling from a noisy parking lot, a customer with a strong regional accent asking about a service that isn't in your FAQ — and suddenly that 95% accuracy claim feels like a lie. It isn't a lie, exactly. It's just measured under conditions that have nothing to do with how your customers actually call you.

Voice AI accuracy has genuinely improved at a remarkable pace. The word error rate (WER) for top commercial speech-to-text engines dropped from roughly 20–25% in 2016 to under 5% on standard benchmarks by 2024. OpenAI's Whisper model achieves a WER of approximately 2.7% on clean English audio. That's a measurable, meaningful leap. But WER alone is a misleading metric for businesses. What actually matters is whether your AI can handle a real conversation — incomplete sentences, interruptions, industry jargon, and a caller who just said "the third Tuesday of next month" instead of giving you a date. This article breaks down what accuracy actually means for business voice AI, what's driving the improvements, and how to measure whether those improvements are paying off in your operation.

The Metrics That Actually Matter for Business Calls

Word Error Rate measures what percentage of individual words a speech model transcribes incorrectly. It's a useful engineering benchmark, but a poor proxy for business performance. A WER of 8% might mean your AI misheard "botox" as "bo talks" — a recoverable error. Or it might mean it missed "cancel" entirely — a much costlier one. For business purposes, four metrics matter far more than WER alone.
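To make the point concrete, here is a minimal sketch of how WER is conventionally computed: word-level edit distance (substitutions, deletions, insertions) divided by the number of words in the reference transcript. The function name and the two sample utterances are illustrative assumptions, not taken from any particular vendor's tooling.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / reference word count."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")

    # prev[j] = edit distance between the reference words seen so far
    # and the first j hypothesis words (classic Levenshtein row).
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(
                prev[j] + 1,         # deletion (reference word missing)
                curr[j - 1] + 1,     # insertion (extra hypothesis word)
                prev[j - 1] + cost,  # substitution or exact match
            )
        prev = curr
    return prev[-1] / len(ref)


# Hypothetical utterances: a recoverable mishearing vs. a dropped keyword.
benign = word_error_rate("i want to book botox", "i want to book bo talks")
costly = word_error_rate("i want to cancel my appointment", "i want to my appointment")
print(f"benign mishearing WER: {benign:.2f}")
print(f"dropped 'cancel' WER:  {costly:.2f}")
```

In this toy pair the dropped "cancel" scores a lower WER than the harmless "bo talks" mishearing, which is exactly why WER alone says little about business impact.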

Intent Recognition Accuracy

This measures whether the AI correctly identified why the caller is calling, regardless of transcription perfection. Modern natural language understanding (NLU) models can often infer correct intent even with transcription errors, because context carries meaning. Industry benchmarks from conversational AI platforms like Twilio, Deepgram, and Cognigy suggest that well-trained domain-specific voice bots achieve 88–94% intent recognition accuracy on in-scope calls. Generic, out-of-the-box deployments typically land in the 70–80% range.

Task Completion Rate (TCR)

TCR measures whether the caller actually accomplished what they called to do — without needing a human agent. This is the closest metric to business value. A well-optimized medical spa booking bot, for example, should complete 75–85% of appointment requests without escalation. Anything below 65% means you're spending more on the AI than you're saving in staff time. Anything above 85% is where real cost reduction begins to compound.

First-Call Resolution and Escalation Rate

These two metrics sit at the intersection of accuracy and business outcomes. High escalation rates (callers being transferred to a human) usually signal either accuracy failures (the AI couldn't understand the caller) or scope failures (the call type wasn't covered in training). A properly deployed voice AI should see escalation rates drop by 15–30% after the first 90 days as call patterns are captured and the model is fine-tuned against real call data.
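The metrics above can be computed from reviewed call logs. The sketch below assumes a hypothetical `CallRecord` schema in which a human reviewer has labeled each call; the field names and sample calls are illustrative only. First-call resolution is left out because it requires linking repeat calls from the same caller, which this simple schema does not capture.

```python
from dataclasses import dataclass


@dataclass
class CallRecord:
    in_scope: bool          # call type was covered by the bot's design
    true_intent: str        # label assigned by a human reviewer
    predicted_intent: str   # intent the bot selected
    task_completed: bool    # caller finished what they called to do
    escalated: bool         # call was transferred to a human


def summarize(calls):
    """Return intent accuracy (in-scope only), task completion, and escalation rates."""
    if not calls:
        raise ValueError("need at least one call record")
    in_scope = [c for c in calls if c.in_scope]
    intent_hits = sum(c.true_intent == c.predicted_intent for c in in_scope)
    # Count a task as completed only if no human agent was needed.
    completed = sum(c.task_completed and not c.escalated for c in calls)
    escalated = sum(c.escalated for c in calls)
    return {
        "intent_accuracy_in_scope": intent_hits / len(in_scope) if in_scope else 0.0,
        "task_completion_rate": completed / len(calls),
        "escalation_rate": escalated / len(calls),
    }


# Hypothetical sample of four reviewed calls.
calls = [
    CallRecord(True, "book", "book", True, False),
    CallRecord(True, "cancel", "reschedule", False, True),
    CallRecord(True, "book", "book", True, False),
    CallRecord(False, "billing_dispute", "book", False, True),
]
metrics = summarize(calls)
print(metrics)
```

Separating in-scope from out-of-scope calls matters: it lets you tell an accuracy failure (wrong intent on a covered call type) apart from a scope failure (a call type the bot was never designed for).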

Why Business Calls Are Harder Than Consumer Use Cases

Voice AI systems trained on podcast transcripts and clean conversational audio perform well in controlled settings. Business calls introduce a cascade of additional complexity that most benchmark datasets don't include. Understanding this gap is the first step toward closing it.

Domain vocabulary: A dermatology clinic uses terms like "fractional resurfacing," "dermal filler units," and "PDO threads." A plumbing company gets calls about "PRV replacement" and "sewer lateral scoping." Generic models aren't trained on these terms and will either mishear them or assign incorrect intent. Domain fine-tuning — feeding the model real call transcripts and service terminology — can lift intent recognition accuracy by 12–20 percentage points in specialty verticals.

Environmental noise: Consumer voice assistants are typically used close to the microphone in relatively quiet settings. Business callers phone from cars, warehouses, job sites, and waiting rooms, and the lower signal-to-noise ratio on those calls degrades transcription accuracy significantly. Modern acoustic enhancement layers (used by platforms like Krisp and NVIDIA Riva) can recover a 3–6% WER improvement just by pre-processing the audio before it hits the speech model.
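One rough way to see how noisy your calls are is to estimate signal-to-noise ratio in decibels. The sketch below uses the standard definition (10 times the base-10 log of the power ratio) and assumes you already have separate speech and noise-only sample lists, for example from a voice activity detector; that segmentation step, the function name, and the sample values are all assumptions for illustration.

```python
import math


def snr_db(speech_samples, noise_samples):
    """SNR in dB = 10 * log10(mean speech power / mean noise power)."""
    if not speech_samples or not noise_samples:
        raise ValueError("both sample lists must be non-empty")
    speech_power = sum(s * s for s in speech_samples) / len(speech_samples)
    noise_power = sum(n * n for n in noise_samples) / len(noise_samples)
    if noise_power == 0:
        return math.inf       # perfectly silent background
    if speech_power == 0:
        return -math.inf      # no speech energy at all
    return 10 * math.log10(speech_power / noise_power)


# Hypothetical amplitudes: speech ten times louder than background noise.
speech = [0.5, -0.5, 0.5, -0.5]
noise = [0.05, -0.05, 0.05, -0.05]
call_snr = snr_db(speech, noise)
print(f"estimated SNR: {call_snr:.1f} dB")
```

Logging a figure like this per call makes it possible to check whether transcription failures cluster on low-SNR calls before paying for a different speech model.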

Speaker variability and accents: The United States alone has 30+ regionally distinct accent clusters. Older voice AI systems showed WER spikes of 30–40% on non-standard American accents. Multi-accent training datasets and acoustic model ensembles have reduced this gap substantially, but going live without accent-aware testing is still a common failure point for small business deployments.
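Accent-aware testing can be as simple as breaking WER out by caller group instead of reporting one average. The sketch below assumes each test call has already been scored (word errors and reference word count) and tagged with a group label; the group names, the counts, and the 1.5x flagging threshold are hypothetical choices, not an industry standard.

```python
from collections import defaultdict


def wer_by_group(results):
    """results: iterable of (group, word_errors, reference_words), one per call."""
    errors = defaultdict(int)
    words = defaultdict(int)
    for group, word_errors, reference_words in results:
        errors[group] += word_errors
        words[group] += reference_words
    # Pool errors and words per group so long calls weigh more than short ones.
    return {g: errors[g] / words[g] for g in words if words[g] > 0}


def flag_gaps(group_wer, overall_wer, max_ratio=1.5):
    """Return groups whose WER exceeds the overall WER by more than max_ratio."""
    return sorted(g for g, w in group_wer.items() if w > overall_wer * max_ratio)


# Hypothetical scored test calls.
results = [
    ("group_a", 4, 100),
    ("group_a", 6, 100),
    ("group_b", 15, 100),
    ("group_c", 5, 100),
]
per_group = wer_by_group(results)
overall = sum(e for _, e, _ in results) / sum(w for _, _, w in results)
flagged = flag_gaps(per_group, overall)
print(per_group, overall, flagged)
```

An acceptable average can hide a group with a much higher error rate, so the per-group view is the one to check before launch.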

The Technical Advances Driving Real Accuracy Gains

Three architectural shifts explain most of the accuracy gains businesses are seeing from voice AI systems deployed in 2024–2026 compared to systems from three or four years ago.

Transformer-Based End-to-End Models

Traditional speech recognition used a pipeline of separate models: acoustic model → language model → post-processing. Transformer-based end-to-end models (like Whisper and its derivatives) compress this pipeline, which reduces the errors that compound from one stage to the next. The language model component now attends to the full audio context instead of relying on word-by-word probability chains. The practical result is better handling of long utterances, mid-sentence corrections, and contextual disambiguation, all of which are common in real business calls.

Real-Time Speaker Adaptation

Early voice AI reset context on every turn. Modern deployed systems use session-level speaker embeddings to adapt to individual callers within the first 3–5 seconds of a call. If a caller has a thick accent or speaks unusually fast, the model adapts to that speaker for the rest of the session. Platforms like AssemblyAI and Deepgram have demonstrated 4–8% WER improvement from real-time adaptation versus static models on diverse caller populations.

Retrieval-Augmented Response Grounding

Accuracy in a business context isn't just transcription — it's also whether the AI gives the right answer. Retrieval-augmented generation (RAG) grounded in business-specific knowledge bases (service menus, pricing, policies, FAQs) dramatically reduces hallucination and incorrect responses. For businesses with more than 20 distinct services or variable pricing, RAG-grounded voice AI is no longer optional — it's the difference between a useful system and a liability.
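The core idea of grounding can be sketched without any model at all: answer only from a retrieved knowledge-base entry, and hand off when nothing relevant is found. The example below swaps in simple keyword overlap for the embedding search and language model a production RAG system would use; the knowledge-base entries, function names, and overlap threshold are all hypothetical.

```python
import re

# Hypothetical knowledge base; a real one would come from the business's
# service menu, pricing sheet, and policies.
KNOWLEDGE_BASE = [
    {"topic": "botox price per unit",
     "answer": "Botox is priced per unit; staff can confirm the current rate."},
    {"topic": "cancellation policy fee",
     "answer": "Cancellations need 24 hours' notice to avoid a fee."},
    {"topic": "opening hours weekend",
     "answer": "We are open Saturdays and closed Sundays."},
]

FALLBACK = "I don't have that information; let me connect you with our staff."


def tokenize(text):
    """Lowercase the text and return its set of alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, kb, min_overlap=2):
    """Return the entry sharing the most tokens with the question, or None."""
    question_tokens = tokenize(question)
    best, best_score = None, 0
    for entry in kb:
        score = len(question_tokens & tokenize(entry["topic"]))
        if score > best_score:
            best, best_score = entry, score
    return best if best_score >= min_overlap else None


def grounded_answer(question, kb=KNOWLEDGE_BASE):
    """Answer only from retrieved content; otherwise fall back to escalation."""
    entry = retrieve(question, kb)
    return entry["answer"] if entry else FALLBACK


print(grounded_answer("What is the price of botox?"))
print(grounded_answer("Do you offer tattoo removal?"))
```

The design choice that matters is the refusal path: when retrieval finds nothing, the system escalates instead of improvising an answer.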

A 4-Layer Framework for Accuracy Improvement

Improving voice AI accuracy on business calls isn't a single-step fix. It's a stack, and each layer builds on the one below it. Here's the framework used by enterprise deployments — applicable at any scale.

| Layer | What It Covers | Key Action | Expected Impact |
|---|---|---|---|
| Layer 1: Audio Quality | Microphone input, noise, codec | Add noise suppression pre-processing | 3–6% WER improvement |
| Layer 2: Transcription Model | Speech-to-text accuracy | Domain fine-tune on real call transcripts | 10–20% intent lift |
| Layer 3: Intent & Context | NLU, multi-turn context retention | Custom intent taxonomy + slot filling | 15–25% TCR improvement |
| Layer 4: Response Grounding | Factual accuracy of answers given | RAG against business knowledge base | Hallucination rate <2% |

The common mistake is optimizing Layer 2 (buying a better ASR engine) while ignoring Layer 1 (bad audio input) and Layer 3 (weak intent taxonomy). A 1% WER improvement from a premium transcription model is completely erased by poor audio quality or an intent model that can't distinguish "reschedule" from "cancel."
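Slot filling at Layer 3 is where a phrase like "the third Tuesday of next month" has to become an actual date. The sketch below handles only that one phrasing pattern with a regular expression and the standard `calendar` module; the function names and the pattern are illustrative assumptions, and a production system would cover many more date expressions.

```python
import calendar
import re
from datetime import date

ORDINALS = {"first": 1, "second": 2, "third": 3, "fourth": 4}
# Monday is 0, matching the convention used by the calendar module.
WEEKDAYS = ["monday", "tuesday", "wednesday", "thursday",
            "friday", "saturday", "sunday"]

PATTERN = re.compile(
    r"\b(first|second|third|fourth)\s+(\w+day)\s+of\s+next\s+month\b"
)


def nth_weekday(year, month, weekday, n):
    """Return the n-th given weekday of a month, or None if it does not exist."""
    first_weekday, days_in_month = calendar.monthrange(year, month)
    day = 1 + (weekday - first_weekday) % 7 + 7 * (n - 1)
    return date(year, month, day) if day <= days_in_month else None


def resolve_date_slot(utterance, today):
    """Resolve '<ordinal> <weekday> of next month' relative to today."""
    match = PATTERN.search(utterance.lower())
    if not match:
        return None
    ordinal, weekday_name = match.groups()
    if weekday_name not in WEEKDAYS:
        return None
    # Roll over to January of the following year when today is in December.
    if today.month == 12:
        year, month = today.year + 1, 1
    else:
        year, month = today.year, today.month + 1
    return nth_weekday(year, month, WEEKDAYS.index(weekday_name), ORDINALS[ordinal])


slot = resolve_date_slot(
    "Can I come in the third Tuesday of next month?", date(2025, 3, 10)
)
print(slot)
```

Returning `None` for anything unrecognized gives the dialogue layer a clear signal to ask a follow-up question instead of guessing a date.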

What Accuracy Improvements Are Actually Worth: An ROI Calculation

Let's put real numbers on this. Take a medical spa receiving 200 inbound calls per month. Industry data suggests 35–40% of those are appointment requests. At a $250 average ticket, that's roughly 75 booking opportunities worth $18,750 per month.

The cost of improving from 70% to 85% task completion (fine-tuning, intent taxonomy work, knowledge base integration) typically runs $1,500–$4,000 as a one-time implementation cost for a small business deployment. That 15-point gain converts about 11 more of the 75 monthly booking opportunities, or roughly $2,750/month in incremental revenue at a $250 ticket, so even the high end of that cost range pays back in under two months. The math is not subtle.
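The arithmetic behind these figures can be reproduced in a few lines. The sketch below uses the article's own inputs; the 37.5% appointment share (the midpoint of the 35–40% range) and rounding extra bookings down to whole appointments are assumptions chosen because they match the 75 opportunities and the $2,750 figure.

```python
import math

calls_per_month = 200
appointment_share = 0.375   # assumed midpoint of the 35–40% range
average_ticket = 250        # dollars per booking
baseline_tcr = 0.70         # task completion before tuning
improved_tcr = 0.85         # task completion after tuning

opportunities = calls_per_month * appointment_share
monthly_opportunity_value = opportunities * average_ticket

# Count only whole additional bookings (assumed rounding).
extra_bookings = math.floor(opportunities * (improved_tcr - baseline_tcr))
incremental_revenue = extra_bookings * average_ticket


def payback_months(one_time_cost, monthly_gain):
    """Months of incremental revenue needed to cover a one-time cost."""
    return one_time_cost / monthly_gain


low = payback_months(1_500, incremental_revenue)
high = payback_months(4_000, incremental_revenue)
print(f"{opportunities:.0f} opportunities worth ${monthly_opportunity_value:,.0f}/month")
print(f"{extra_bookings} extra bookings = ${incremental_revenue:,.0f}/month")
print(f"payback: {low:.1f} to {high:.1f} months")
```

Swapping in your own call volume, ticket size, and completion rates shows quickly whether the same tuning work would pay back in your operation.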

Practical Steps Before You Deploy or Upgrade

If you're evaluating voice AI for the first time or troubleshooting an underperforming deployment, this checklist cuts through the noise:

The Accuracy Floor Is Rising — But Deployment Still Determines Results

The fundamental accuracy of voice AI technology is no longer the primary constraint for most businesses. The models are good enough. What separates a voice AI deployment that genuinely handles 80–85% of calls from one that frustrates customers and gets turned off in three months is implementation quality: domain tuning, intent design, grounding, and disciplined post-deployment iteration.

The businesses seeing real ROI from voice AI in 2025 aren't necessarily using better models; they're running the deployment process more rigorously. They measure the right metrics, they review call failures systematically, and they treat the first 90 days as a tuning phase, not a hands-off autopilot. That discipline is what converts a technology with impressive benchmark numbers into a system that actually handles your customers well. For businesses that want to move in that direction, working with an implementation partner that understands both the technical stack and the operational context, like the team at Epiphany Dynamics, can compress that learning curve considerably.